With the rise of AI models like ChatGPT, we have seen a growing interest in the question: How human is AI? We have also witnessed growing anxiety that the potentially superior competence of AI will increasingly replace human abilities. What’s more surprising, however, is that these questions and concerns are not new: they are hundreds of years old.

Philosophers like René Descartes, Thomas Hobbes, and Gottfried Wilhelm Leibniz, all impressed by the advances in the mechanical sciences, asserted that parts of the human mind (or in Hobbes' case, all of it) function like mechanisms. Descartes likewise argued that, in principle, we might be unable to tell humans apart from “automata.” And IT geeks who believe they live in a Matrix-like computer simulation will be happy to hear that Descartes argued that it is indeed possible to live in such a simulation, though with a 17th-century Catholic “evil demon” instead of a computer.

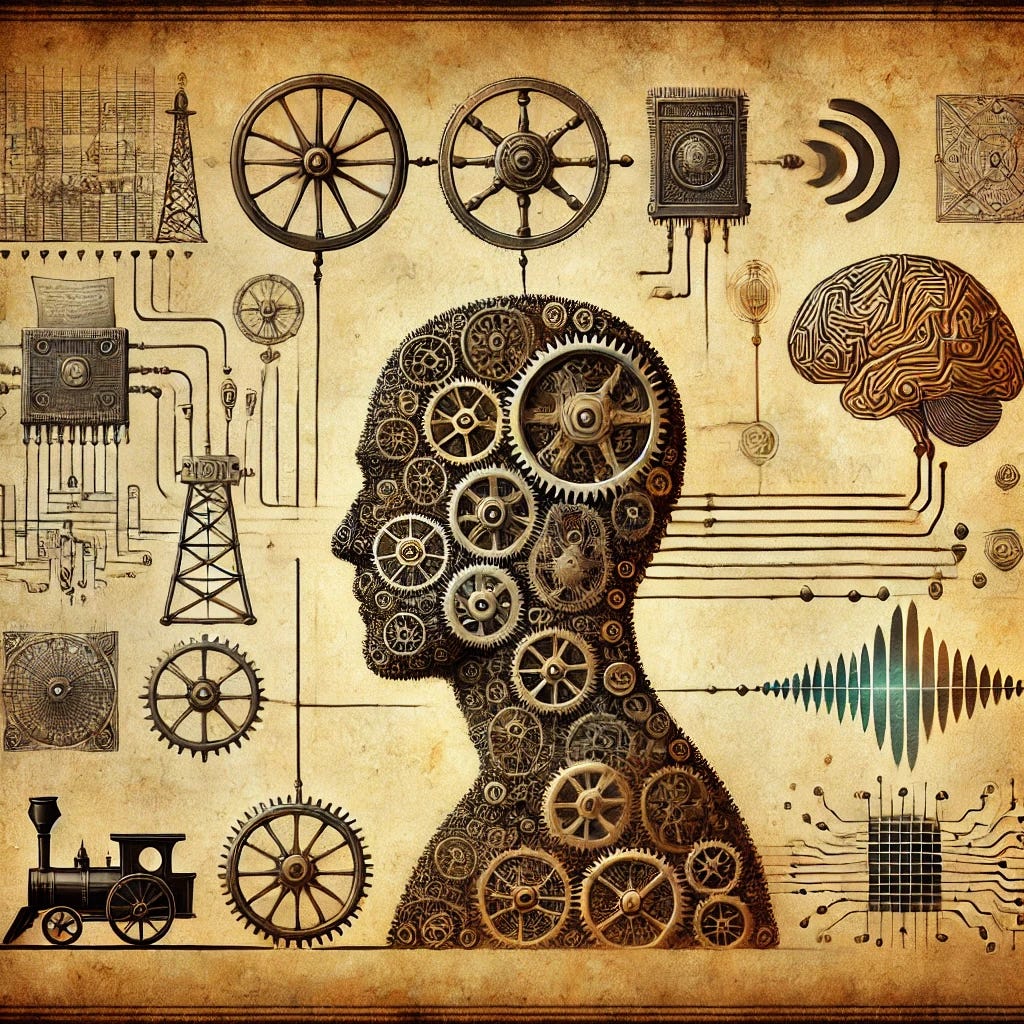

Of course, these evaluations were not based on modern AI models but on mechanical devices such as clocks operated with the help of cogwheels, the pumps of water fountains, and the sawing machines you found in paper mills—your 17th-century high-tech. Later thinkers such as Sigmund Freud, Hermann von Helmholtz, and William James likened the mind to the technology of their day, envisioning parts of human mentality analogous to telegraphs and telephone switchboards.

As actual AI (or rather, the humble beginnings of symbolic computation) began to emerge, pioneers like Alan Turing speculated that computers might one day rival the human mind. This was a bold claim, given that computers at the time were massive, room-sized machines with very limited capabilities.

No less boldly, with the onset of the “cognitive revolution” in psychology during the 1960s, philosophers and foundational figures of cognitive science like Hilary Putnam and Jerry Fodor (who wrote an entire book on how Descartes was right about psychology) confidently asserted that human minds were essentially computers. Remarkably, these assertions were made when computers, although advancing, were still large, cumbersome, partially based on plug boards and punch cards, less powerful, and, importantly, less “human” than an early 2000s smartphone.

This historical perspective should give us pause. It appears that the idea of comparing the human mind to the most advanced technology of the time is a recurring theme. Just as 17th-century thinkers saw the mind as a clockwork mechanism, today’s thinkers often see it as a computer.

Indeed, the late Berkeley philosopher Hubert Dreyfus, following the eminent philosophers Edmund Husserl and Martin Heidegger, warned that our understanding of both humans and AI rests less on reality and our empirical, scientific understanding of it than on cultural assumptions about what it means to be human and on philosophers' fascination with the idea that everything can be described as a mechanism.

This is not confined to our understanding of humanity and AI. We have inherited the scientific, rationalist ideology that emerged in the 17th century, which assumes that everything can be reduced to mechanisms. We see it in economics, where we believe that everything can be reduced to market behavior, and in psychology, where researchers seriously argued that everything can be reduced mechanically to stimulus and response, and later to sensory input and behavioral output with computation in the middle.

While this reductionist account of mechanism might have been instrumentally advantageous up until recently in physics and chemistry, it has done harm everywhere else. Even in physics, researchers have come to reject such mechanistic reduction and instead work with the assumption of complexity.

That means there is a serious possibility that our concerns about how human AI is rest primarily on 17th-century philosophical ideology: they are just a logical consequence of ideas of reduction and mechanism that are not necessarily justified. Mere assumptions and conjectures about nature and science have, over the centuries, become basic assumptions about reality itself. This allowed us not only to conceive of computers as human-like but also to see humans as continuous with AI, turning this idea into a self-fulfilling prophecy.

While there is no doubt about how impressive modern AI is, and while it is certainly imaginable that human work will be replaced by AI, it is important to keep in mind that this does not mean that AI is human or that humans are similar to AI. Modern factory machines are impressive too, and they have replaced many forms of human labor. Yet it does not follow from this that factory machines are human.

“Human” is not a performance attribute or value judgment—though it has been widely used in this way to differentiate us from animals. Rather, “human” refers to how we are. Machines might be or might become superior to us in many tasks that we deemed uniquely human, but that is not the same as being human.

If you are interested in my research, please consider visiting alexjeuk.com.

© 2024 Alexander Jeuk for the text. For the image see the caption.